When he goes through more cases and examples, he realizes sometimes certain border can be blur (less certain, higher loss), even though he can make better decisions (more accuracy). When someone started to learn a technique, he is told exactly what is good or bad, what is certain things for (high certainty).

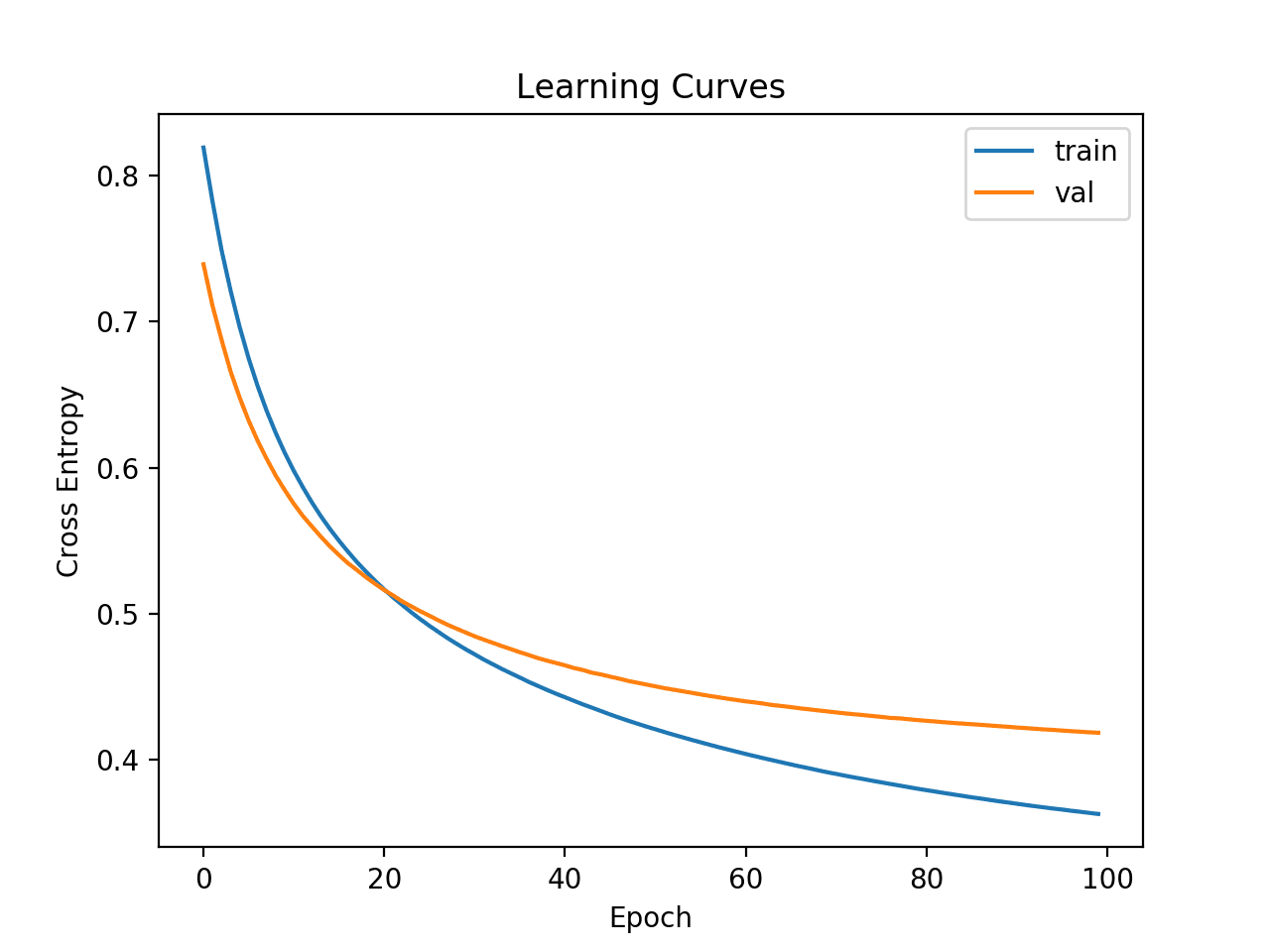

This is a case where the model is less certain about certain things as being trained longer.Experiment with more and larger hidden layers. The model doesn't have enough aspect of information to be certain.Check whether these sample are correctly labelled. Compare the false predictions when val_loss is minimum and val_acc is maximum. Hopefully it can help explain this problem. And suggest some experiments to verify them. And they cannot suggest how to digger further to be more clear. But they don't explain why it becomes so. Many answers focus on the mathematical calculation explaining how is this possible. I sadly have no answer for whether or not this "overfitting" is a bad thing in this case: should we stop the learning once the network is starting to learn spurious patterns, even though it's continuing to learn useful ones along the way?įinally, I think this effect can be further obscured in the case of multi-class classification, where the network at a given epoch might be severely overfit on some classes but still learning on others. However, it is at the same time still learning some patterns which are useful for generalization (phenomenon one, "good learning") as more and more images are being correctly classified (image C, and also images A and B in the figure). The network is starting to learn patterns only relevant for the training set and not great for generalization, leading to phenomenon 2, some images from the validation set get predicted really wrong (image C in the figure), with an effect amplified by the "loss asymetry". So I think that when both accuracy and loss are increasing, the network is starting to overfit, and both phenomena are happening at the same time. (Getting increasing loss and stable accuracy could also be caused by good predictions being classified a little worse, but I find it less likely because of this loss "asymetry"). See this answer for further illustration of this phenomenon. 
For a cat image (ground truth : 1), the loss is $log(output)$, so even if many cat images are correctly predicted (eg images A and B in the figure, contributing almost nothing to the mean loss), a single misclassified cat image will have a high loss, hence "blowing up" your mean loss. Note that when one uses cross-entropy loss for classification as it is usually done, bad predictions are penalized much more strongly than good predictions are rewarded. This leads to a less classic " loss increases while accuracy stays the same". Some images with very bad predictions keep getting worse (image D in the figure). This is the classic " loss decreases while accuracy increases" behavior that we expect when training is going well. Some images with borderline predictions get predicted better and so their output class changes (image C in the figure). I believe that in this case, two phenomenons are happening at the same time. Let's consider the case of binary classification, where the task is to predict whether an image is a cat or a dog, and the output of the network is a sigmoid (outputting a float between 0 and 1), where we train the network to output 1 if the image is one of a cat and 0 otherwise. I stress that this answer is therefore purely based on experimental data I encountered, and there may be other reasons for OP's case. I have myself encountered this case several times, and I present here my conclusions based on the analysis I had conducted at the time. However, accuracy and loss intuitively seem to be somewhat (inversely) correlated, as better predictions should lead to lower loss and higher accuracy, and the case of higher loss and higher accuracy shown by OP is surprising. So if raw outputs change, loss changes but accuracy is more "resilient" as outputs need to go over/under a threshold to actually change accuracy. 
Other answers explain well how accuracy and loss are not necessarily exactly (inversely) correlated, as loss measures a difference between raw output (float) and a class (0 or 1 in the case of binary classification), while accuracy measures the difference between thresholded output (0 or 1) and class.
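To make the cat/dog argument concrete, here is a minimal sketch with made-up sigmoid outputs for four hypothetical cat images, loosely named after images A to D. The numbers are invented for illustration and are not taken from any real training run. The helpers `binary_cross_entropy`, `accuracy` and `mean_loss` are names introduced here, and a 0.5 threshold is assumed. Between the two "epochs", the borderline image C crosses the threshold (phenomenon 1) while image D becomes even more confidently wrong (phenomenon 2). As a result, accuracy and mean loss both go up.

```python
import math


def binary_cross_entropy(p: float, y: int, eps: float = 1e-12) -> float:
    """Per-sample cross-entropy for a sigmoid output p and a label y (1 = cat, 0 = dog)."""
    p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
    return -math.log(p) if y == 1 else -math.log(1.0 - p)


def accuracy(outputs, labels, threshold=0.5):
    """Fraction of samples whose thresholded output matches the label."""
    correct = sum(int(p >= threshold) == y for p, y in zip(outputs, labels))
    return correct / len(labels)


def mean_loss(outputs, labels):
    """Mean cross-entropy over the samples, as reported as val_loss."""
    return sum(binary_cross_entropy(p, y) for p, y in zip(outputs, labels)) / len(labels)


# Hypothetical validation set: four cat images A, B, C, D (all label 1).
labels = [1, 1, 1, 1]
epoch_early = [0.95, 0.90, 0.45, 0.10]  # C borderline and wrong, D badly wrong
epoch_late = [0.97, 0.93, 0.55, 0.01]   # C crosses the threshold, D gets worse

for name, outputs in [("early", epoch_early), ("late", epoch_late)]:
    print(f"{name}: accuracy={accuracy(outputs, labels):.2f}, "
          f"mean loss={mean_loss(outputs, labels):.3f}")
# early: accuracy=0.50, mean loss=0.814
# late: accuracy=0.75, mean loss=1.327
```

In the late epoch, image D alone contributes about 1.15 to the mean loss (4.605 / 4). This is the loss asymmetry at work: the small gains on A, B and C cannot offset one confidently wrong prediction.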
